The Fleeting Life of an LLM

Because someone had to anthropomorphize them

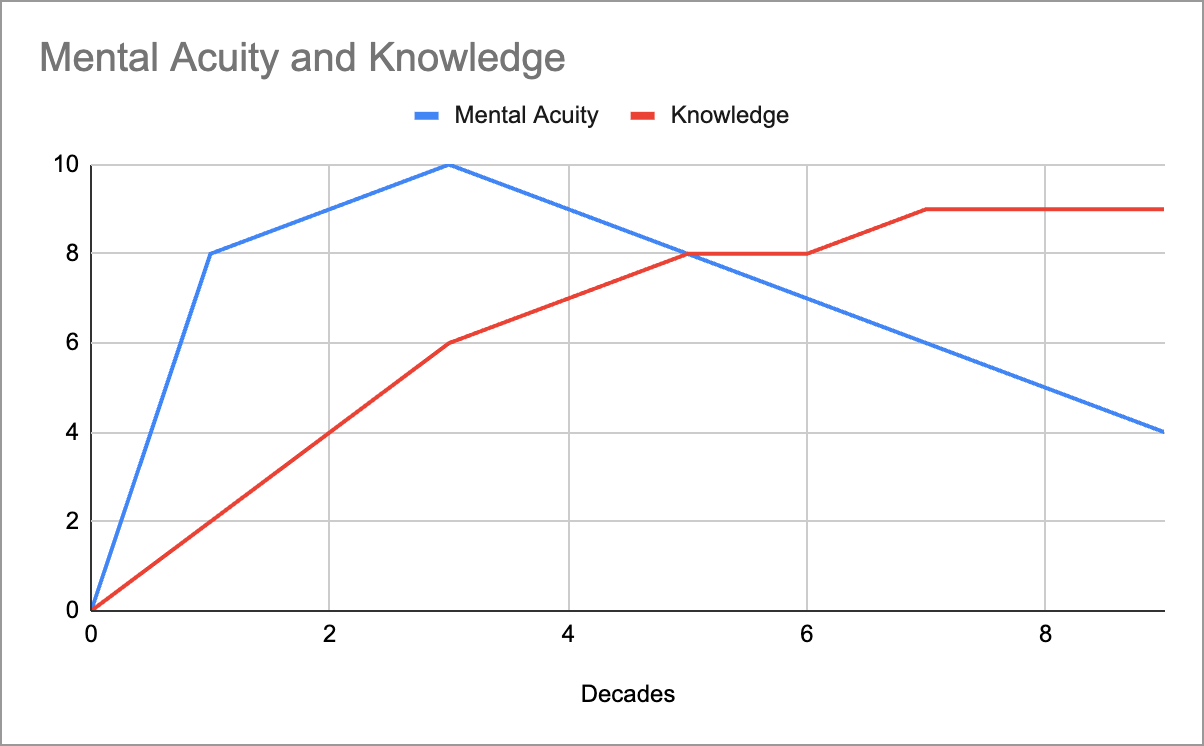

Humans start with nothing: no knowledge, no skills, barely a sense of which way is up. Our mental acuity ramps up fast, peaks around 35, and then begins the long, polite glide path toward “why did I walk into this room?”¹ But knowledge keeps accumulating long after the sharpness fades, which is why our overall usefulness often keeps rising well past our cognitive prime.² Eventually, either our acuity drops too low to reach what we know, or what we know stops mattering. Until then, we lean on experience.

LLMs live a similar lifecycle, just accelerated to the point of comedy. They spawn at peak intelligence, like a newborn who’s already finished grad school. But from the very first token they’re on the decline, thanks to attention dilution: roughly, the more tokens pile into the context, the thinner the model’s attention is spread across them. You’re in a desperate race to shove context in before the model forgets why you’re talking to it, who you are, or what century it is. After fifteen minutes, it’s basically your friend at 2 a.m. insisting they’re “totally fine to drive.”

Humans are racing against time; LLMs are racing against the context window (attention dilution, accumulated noise, instruction drift, and so on). That’s the key difference. We learn by doing things, stacking up lived experience to offset declining throughput. LLMs, meanwhile, get dumber because they’re doing things. Every new token is both “experience” and a small act of self-erosion. It’s as if reading one page of a book made you instantly worse at reading the next.

The reason I’m thinking about all this is that working with LLMs forced me into a strange kind of self-reflection. At first, their rapid slide into confusion felt completely foreign, and I had to invent new ways of giving them work: splitting tasks, adding structure, managing their attention. But the longer I sat with it, the more humbling the realization became. This isn’t alien at all. It’s a compressed version of our own lives. We build systems and habits to compensate for fading focus and limited memory, hoping that accumulated experience will outrun the entropy. LLMs just do the whole thing on fast-forward.

¹ I’m intentionally not using scientific terms here. “Acuity” is handwaving over some notion of mental horsepower.

² “Knowledge” is handwaving over learned skills, experience, pattern recognition, facts, and so on: all the stuff you had to learn or practice that makes you better at something.